Optimizing Next.js Performance at Scale: A Senior Engineer's Playbook

Performance isn't a 'nice-to-have'; it's a direct driver of revenue and user retention. Akamai's 2017 retail performance study found that a 100ms delay in page load time can reduce conversion rates by up to 7%. Across the Next.js projects I've shipped over the past five years, the same bottlenecks keep surfacing: inflated Total Blocking Time (TBT), poor Largest Contentful Paint (LCP) scores, and hydration waterfalls that make pages feel sluggish even when they technically 'loaded'.

This guide walks through the exact strategies I used to bring the LCP of a high-traffic e-commerce project from 2.4s down to 0.8s — a 67% improvement that directly correlated with a 12% increase in add-to-cart rates. Let's move beyond the basics of 'optimize your images' and dive into architectural patterns available in modern Next.js.

1. The Hidden Cost of Client-Side Hydration

The most impactful performance lever in most React applications is reducing the amount of JavaScript shipped to the client. When React hydrates, it re-executes your component tree in the browser, occupying the main thread and leaving the page unresponsive to input until it finishes. That cost shows up as TBT (Total Blocking Time), a lab metric reported by Lighthouse, and as INP (Interaction to Next Paint), a Core Web Vital measured on real users. Core Web Vitals feed into Google's page experience signals for search; TBT does not do so directly, but it is a useful lab proxy for the same responsiveness problems.
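To make the TBT number concrete: Lighthouse computes it by taking every main-thread task longer than 50ms between First Contentful Paint and Time to Interactive and summing the portion beyond 50ms. Here's a minimal sketch of that rule. The function name and the sample task durations are illustrative assumptions, not measurements from the project.

```typescript
// A main-thread task counts as "long" once it exceeds 50 ms (per the TBT definition).
const LONG_TASK_THRESHOLD_MS = 50;

// Sum the blocking portion (time beyond 50 ms) of every task.
function totalBlockingTime(taskDurationsMs: number[]): number {
  return taskDurationsMs.reduce(
    (total, duration) => total + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0,
  );
}

// Hypothetical traces: one 400 ms hydration task vs. the same work in ten 40 ms chunks.
const monolithicHydration: number[] = [400];
const chunkedHydration: number[] = Array.from({ length: 10 }, () => 40);

console.log(totalBlockingTime(monolithicHydration)); // 350
console.log(totalBlockingTime(chunkedHydration)); // 0
```

The same 400ms of work contributes 350ms of blocking time as a single task and none when it never holds the thread for more than 50ms at a stretch, which is why shrinking what has to hydrate matters more than raw execution speed.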

The solution isn't just 'less code'; it's 'less client-side code'. React Server Components (RSC) fundamentally change this equation by allowing you to render non-interactive UI on the server. Their output reaches the browser as HTML plus a serialized render payload, and their component code never enters the client bundle, so there is nothing to hydrate for them.

“Server Components are not just about data fetching. They are about removing the hydration cost of non-interactive UI elements. A typical page has 80% static content and 20% interactive — why ship JavaScript for all of it?”

Consider a typical e-commerce product page. The hero image, product description, specs table, and reviews are all static content. Only the 'Add to Cart' button, quantity selector, and variant picker need interactivity. With RSC, you can render all the static parts on the server and only hydrate the interactive islands.

```jsx
// ❌ Bad: Entire page hydrates (ships ~150kb JS)
'use client'

export default function ProductPage({ product }) {
  return (
    <div>
      <ProductHero product={product} />
      <ProductSpecs specs={product.specs} />
      <ReviewsList reviews={product.reviews} />
      <AddToCartButton productId={product.id} />
    </div>
  );
}
```

```jsx
// ✅ Good: Only interactive elements hydrate (~12kb JS)
// This is a Server Component by default (no 'use client')
import { AddToCartButton } from './AddToCartButton'; // this file starts with 'use client'
import { VariantPicker } from './VariantPicker'; // this file starts with 'use client'

export default function ProductPage({ product }) {
  return (
    <div>
      <ProductHero product={product} /> {/* Server: 0kb JS */}
      <ProductSpecs specs={product.specs} /> {/* Server: 0kb JS */}
      <ReviewsList reviews={product.reviews} /> {/* Server: 0kb JS */}
      <VariantPicker variants={product.variants} />
      <AddToCartButton productId={product.id} />
    </div>
  );
}
```

In the e-commerce project I mentioned, this single change (converting the product page from a client component to a server component with client islands) reduced the page's JavaScript bundle from 148kb to 11kb (gzipped). TBT dropped from 890ms to under 100ms.

2. Strategic Code Splitting with Dynamic Imports

Even within client components, not everything needs to load immediately. The `next/dynamic` API lets you defer loading of heavy components until they're actually needed. This is particularly effective for below-the-fold content, modals, and rarely-used features.

```jsx
'use client'; // In the App Router, `ssr: false` is only allowed inside a Client Component

import dynamic from 'next/dynamic';
import { ChartSkeleton } from './ChartSkeleton'; // assumed path for the loading placeholder

// Heavy chart library (~80kb): its chunk is fetched the first time <AnalyticsChart /> renders.
// To defer it until the user scrolls to the dashboard, only render it once that section is visible.
const AnalyticsChart = dynamic(
  () => import('./AnalyticsChart'),
  {
    loading: () => <ChartSkeleton />,
    ssr: false, // No need to server-render a chart
  }
);

// Rich text editor: render it only after the user clicks "Edit" so the chunk loads on demand
const RichEditor = dynamic(
  () => import('./RichEditor'),
  { ssr: false }
);
```

A common mistake I see: using dynamic imports for everything, including small components. Dynamic imports add a network waterfall: the component's chunk only starts loading after the parent renders. For components under 5kb, the overhead of the extra request outweighs the savings. Reserve dynamic imports for components over 20kb or those that require heavy third-party libraries.
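The thresholds above can be written down as a small decision helper, which is handy in code review. This is a sketch of my rule of thumb, not a Next.js API: the type names, the `aboveTheFold` check, and the 'measure-first' bucket for the 5kb to 20kb range are assumptions I've added for illustration.

```typescript
type LoadingDecision = 'static-import' | 'dynamic-import' | 'measure-first';

interface ComponentProfile {
  gzippedKb: number; // size of the component's own chunk
  pullsHeavyThirdParty: boolean; // e.g. a charting or rich-text library
  aboveTheFold: boolean; // visible without scrolling or interaction
}

function decideLoading(component: ComponentProfile): LoadingDecision {
  // Content the user sees immediately should not wait on an extra request.
  if (component.aboveTheFold) return 'static-import';
  // Over 20kb, or dragging in a heavy dependency: worth deferring.
  if (component.pullsHeavyThirdParty || component.gzippedKb > 20) return 'dynamic-import';
  // Under 5kb: the extra round trip costs more than it saves.
  if (component.gzippedKb < 5) return 'static-import';
  // In between, the answer depends on the page; profile before deciding.
  return 'measure-first';
}

console.log(decideLoading({ gzippedKb: 80, pullsHeavyThirdParty: true, aboveTheFold: false })); // dynamic-import
console.log(decideLoading({ gzippedKb: 3, pullsHeavyThirdParty: false, aboveTheFold: false })); // static-import
```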

3. Advanced Caching with ISR and On-Demand Revalidation

Next.js provides granular control over caching through the fetch API and Route Segment Config. For high-traffic pages, I combine Incremental Static Regeneration (ISR) with on-demand revalidation to serve static pages from the edge (TTFB < 50ms) while keeping content fresh.

The key insight: different data on the same page can have different freshness requirements. Product descriptions change weekly; prices change hourly; inventory status changes every minute. ISR lets you set different revalidation intervals for each data source.
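One consequence is worth keeping in mind when mixing intervals. As I read the Next.js caching documentation, the lowest `revalidate` value used anywhere in a route determines how often the route as a whole is regenerated, while each fetch keeps its own lifetime in the data cache. Here's a minimal sketch of that rule using the intervals from this section's product page; the helper and type names are hypothetical.

```typescript
interface DataSource {
  name: string;
  revalidateSeconds: number;
}

// The route is regenerated as often as its most impatient data source demands.
function effectiveRouteRevalidate(pageLevelSeconds: number, sources: DataSource[]): number {
  return Math.min(pageLevelSeconds, ...sources.map((source) => source.revalidateSeconds));
}

const productPageSources: DataSource[] = [
  { name: 'product', revalidateSeconds: 86400 }, // daily
  { name: 'pricing', revalidateSeconds: 60 }, // every minute
  { name: 'inventory', revalidateSeconds: 30 }, // every 30 seconds
];

console.log(effectiveRouteRevalidate(3600, productPageSources)); // 30
```

So a page-level value of one hour acts as an upper bound here, not the real rebuild cadence. The slow-changing fetches still pay off because their cached responses are reused across those more frequent regenerations.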

```tsx
// app/products/[slug]/page.tsx

// Assumption: the API base URL is provided through an environment variable
const API_URL = process.env.API_URL;

// Page-level default: regenerate at most once per hour.
// A fetch with a lower `revalidate` shortens this interval for the whole route.
export const revalidate = 3600;

async function getProduct(slug: string) {
  // Start all three requests together so they don't form a waterfall
  const [product, pricing, inventory] = await Promise.all([
    // Product details: revalidate daily
    fetch(`${API_URL}/products/${slug}`, {
      next: { revalidate: 86400, tags: [`product-${slug}`] },
    }),
    // Price: revalidate every minute
    fetch(`${API_URL}/pricing/${slug}`, {
      next: { revalidate: 60, tags: [`pricing-${slug}`] },
    }),
    // Inventory: revalidate every 30 seconds
    fetch(`${API_URL}/inventory/${slug}`, {
      next: { revalidate: 30, tags: [`inventory-${slug}`] },
    }),
  ]);

  return {
    ...(await product.json()),
    pricing: await pricing.json(),
    inventory: await inventory.json(),
  };
}
```

For critical updates (price changes, stock-outs), you can also trigger on-demand revalidation from your CMS or admin panel via a webhook:

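```ts
// app/api/revalidate/route.ts
import { revalidateTag } from 'next/cache';
import { NextRequest, NextResponse } from 'next/server';

export async function POST(request: NextRequest) {
  const { tag, secret } = await request.json();

  // Reject callers that don't know the shared secret
  if (secret !== process.env.REVALIDATION_SECRET) {
    return NextResponse.json({ error: 'Unauthorized' }, { status: 401 });
  }

  // Guard against a missing or malformed tag before touching the cache
  if (typeof tag !== 'string' || tag.length === 0) {
    return NextResponse.json({ error: 'Missing tag' }, { status: 400 });
  }

  // Invalidate every cached fetch that was tagged with this value
  revalidateTag(tag);

  return NextResponse.json({ revalidated: true, tag });
}
```

4. Taming Third-Party Scripts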

Marketing tags (Google Tag Manager, Meta Pixel, Hotjar) are the silent killers of web performance. I've seen GTM alone add 500ms+ to TBT because it loads dozens of additional scripts synchronously. The Next.js Script component offers a strategy prop that most developers don't fully leverage.

- strategy='beforeInteractive': Only for truly critical scripts like bot detection or A/B testing frameworks that must run before any Next.js code and before hydration. Place in root layout.

- strategy='afterInteractive': Default strategy. Loads after hydration. Use for analytics and tracking pixels.

- strategy='lazyOnload': Loads during browser idle time. Perfect for chat widgets, NPS surveys, and non-critical marketing tags.

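- strategy='worker': Offloads script execution to a Web Worker using Partytown. Experimental: it must be enabled with the nextScriptWorkers flag in next.config and, at the time of writing, is not supported in the App Router. Where it applies, it is extremely effective for heavy analytics.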

My recommendation: audit every third-party script in Chrome DevTools, using the Performance panel to find long main-thread tasks and the Coverage tab to see how much of each script goes unused. If a script blocks the main thread for more than 50ms, it's a candidate for lazyOnload or worker strategy. On one project, moving Hotjar and Intercom to lazyOnload reduced TBT by 320ms with zero impact on data collection.
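The 50ms rule is easy to apply mechanically once you have per-script blocking times from a trace. Here's a minimal sketch of that triage step. The types, the function name, and the sample timings are hypothetical; the blocking times would come from your own profiling, not from any Next.js API.

```typescript
type ScriptStrategy = 'beforeInteractive' | 'afterInteractive' | 'lazyOnload' | 'worker';

interface ThirdPartyScript {
  name: string;
  blockingMs: number; // main-thread time attributed to the script in a trace
  current: ScriptStrategy;
}

const BLOCKING_THRESHOLD_MS = 50;

// Names of scripts that exceed the threshold and are not already deferred,
// worst offender first.
function deferralCandidates(scripts: ThirdPartyScript[]): string[] {
  return scripts
    .filter((script) => script.blockingMs > BLOCKING_THRESHOLD_MS)
    .filter((script) => script.current !== 'lazyOnload' && script.current !== 'worker')
    .sort((a, b) => b.blockingMs - a.blockingMs)
    .map((script) => script.name);
}

// Illustrative numbers only
const audit: ThirdPartyScript[] = [
  { name: 'Hotjar', blockingMs: 180, current: 'afterInteractive' },
  { name: 'Google Tag Manager', blockingMs: 500, current: 'afterInteractive' },
  { name: 'Intercom', blockingMs: 140, current: 'lazyOnload' },
  { name: 'Small pixel', blockingMs: 20, current: 'afterInteractive' },
];

console.log(deferralCandidates(audit)); // [ 'Google Tag Manager', 'Hotjar' ]
```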

```jsx
import Script from 'next/script';

export default function RootLayout({ children }) {
  return (
    <html lang="en">
      <body>
        {children}

        {/* Analytics: load after page is interactive */}
        <Script
          src="https://www.googletagmanager.com/gtag/js?id=G-XXXXX"
          strategy="afterInteractive"
        />

        {/* Chat widget: load only when browser is idle */}
        <Script
          src="https://chat-widget.com/embed.js"
          strategy="lazyOnload"
        />

        {/* Heavy analytics: offload to web worker.
            Caveat: needs the experimental nextScriptWorkers flag and, at the time
            of writing, is documented as unsupported in the App Router. */}
        <Script
          src="https://heavy-analytics.com/tracker.js"
          strategy="worker"
        />
      </body>
    </html>
  );
}
```

5. Image Optimization Beyond next/image

The next/image component handles responsive sizing and format conversion (WebP/AVIF) automatically. But there are several additional optimizations that make a measurable difference at scale:

- Always set priority={true} on your LCP image (typically the hero or first product image). This disables lazy loading and adds a preload hint so the browser fetches the image early.

- Use the sizes prop accurately — don't default to 100vw. A product card image that's 300px wide on desktop shouldn't load a 1200px source.

- For user-uploaded images, raise minimumCacheTTL in next.config so optimized variants stay cached longer and aren't regenerated each time a short upstream cache lifetime expires.

- Use placeholder='blur' with blurDataURL for instant perceived loading. blurDataURL must be a small base64-encoded image data URL (not a raw BlurHash string), so generate it at build time or upload time.

- Configure formats: ['image/avif', 'image/webp'] — AVIF delivers 20–30% smaller files than WebP for photographic content.
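The two config-level items in this list live under the `images` key of the Next.js config. Here's a minimal sketch, assuming a `next.config.ts` file (older versions use `next.config.js` or `.mjs` with the same object). The 30-day TTL is an illustrative value I picked, not a recommendation from the project.

```typescript
// next.config.ts (sketch): only the `images` options discussed above are shown
const nextConfig = {
  images: {
    // Offer AVIF first and fall back to WebP for browsers without AVIF support
    formats: ['image/avif', 'image/webp'],
    // Minimum time, in seconds, that an optimized image stays cached (assumed: 30 days)
    minimumCacheTTL: 60 * 60 * 24 * 30,
  },
};

export default nextConfig;
```

AVIF takes noticeably longer to encode than WebP, so the first request for each variant is slower. A longer cache TTL is what makes that trade-off pay off.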

```jsx
import Image from 'next/image';

// ✅ Optimized hero image (component name is illustrative)
export function HeroImage({ product }) {
  return (
    <Image
      src="/hero-product.jpg"
      alt="Product name: descriptive alt text for SEO"
      width={1200}
      height={630}
      priority // Preloads as LCP element
      sizes="(max-width: 768px) 100vw, (max-width: 1200px) 50vw, 600px"
      placeholder="blur"
      blurDataURL={product.blurDataURL} // Base64 data URL, pre-generated at upload
    />
  );
}

// ✅ Lazy-loaded product grid images (component name is illustrative)
export function ProductThumbnail({ product }) {
  return (
    <Image
      src={product.thumbnail}
      alt={product.name}
      width={400}
      height={400}
      sizes="(max-width: 640px) 50vw, (max-width: 1024px) 33vw, 25vw"
      loading="lazy" // Default, but explicit for clarity
    />
  );
}
```

6. Measuring What Matters: A Performance Budget

Optimization without measurement is guesswork. I set up performance budgets at the start of every project and enforce them in CI. Here are the thresholds I target for production Next.js applications:

| Metric | Target | Tool |
|---|---|---|
| LCP | < 1.2s | Lighthouse CI, CrUX |
| TBT / INP | < 200ms | Chrome DevTools, Web Vitals |
| CLS | < 0.05 | Lighthouse CI |
| TTFB | < 200ms (edge) | Vercel Analytics |
| JS Bundle (gzip) | < 100kb first load | @next/bundle-analyzer |
| First Load JS | < 85kb shared + < 30kb page | next build output |

I integrate Lighthouse CI into the GitHub Actions pipeline so that every pull request is checked against these budgets. If a PR adds more than 10kb to the first-load JS, it's flagged for review. This prevents the gradual performance regression that plagues most long-lived projects.
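The first-load JS rule is simple enough to express as a small check that a CI step could run. Here's a sketch under stated assumptions: the bundle sizes are passed in as plain numbers (how you extract them from the `next build` output is up to your pipeline), and the type and function names are hypothetical.

```typescript
interface BundleReport {
  sharedKb: number; // shared first-load JS, gzipped
  pageKb: number; // page-specific first-load JS, gzipped
}

// Budgets taken from the table above, plus the 10kb per-PR growth limit
const BUDGET = { sharedKb: 85, pageKb: 30, maxIncreaseKb: 10 };

// Returns a list of human-readable violations; an empty list means the PR passes.
function checkBudget(base: BundleReport, pr: BundleReport): string[] {
  const problems: string[] = [];
  if (pr.sharedKb >= BUDGET.sharedKb) {
    problems.push(`shared JS is ${pr.sharedKb}kb (budget: under ${BUDGET.sharedKb}kb)`);
  }
  if (pr.pageKb >= BUDGET.pageKb) {
    problems.push(`page JS is ${pr.pageKb}kb (budget: under ${BUDGET.pageKb}kb)`);
  }
  const increase = pr.sharedKb + pr.pageKb - (base.sharedKb + base.pageKb);
  if (increase > BUDGET.maxIncreaseKb) {
    problems.push(`first-load JS grew by ${increase}kb (limit: ${BUDGET.maxIncreaseKb}kb)`);
  }
  return problems;
}

// Illustrative numbers only
console.log(checkBudget({ sharedKb: 80, pageKb: 20 }, { sharedKb: 81, pageKb: 22 })); // []
console.log(checkBudget({ sharedKb: 80, pageKb: 20 }, { sharedKb: 82, pageKb: 31 }).length); // 2
```

Returning messages instead of a boolean keeps the check useful as a PR comment: the reviewer sees which budget was exceeded and by how much.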

Conclusion: Performance Is Architecture

The techniques above aren't micro-optimizations you bolt on at the end — they're architectural decisions you make from day one. Server Components, strategic code splitting, granular caching, and script management are all part of the initial project setup, not a post-launch audit.

In my web development practice, performance budgets are established in the discovery phase and enforced throughout development. The tooling in modern Next.js makes this easier than ever — the hard part is being disciplined about what you ship to the client. If you're wrestling with performance issues on an existing Next.js application, a cloud architecture review can often reveal quick wins in caching and edge deployment before you touch a single component.

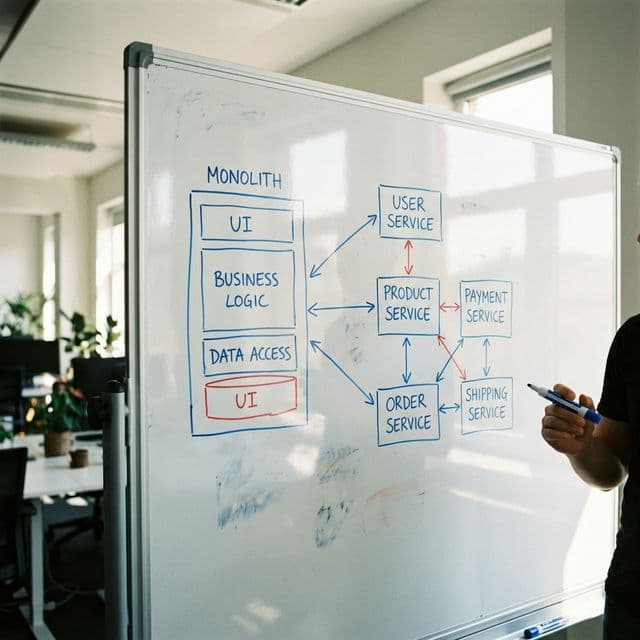

For a broader perspective on how architectural decisions affect long-term maintainability, check out my guide on microservices vs monolith architecture — many of the same principles about avoiding premature complexity apply to frontend performance optimization.
